Bots Are Terrible at Recognizing Black Faces. Let's Keep it That Way.

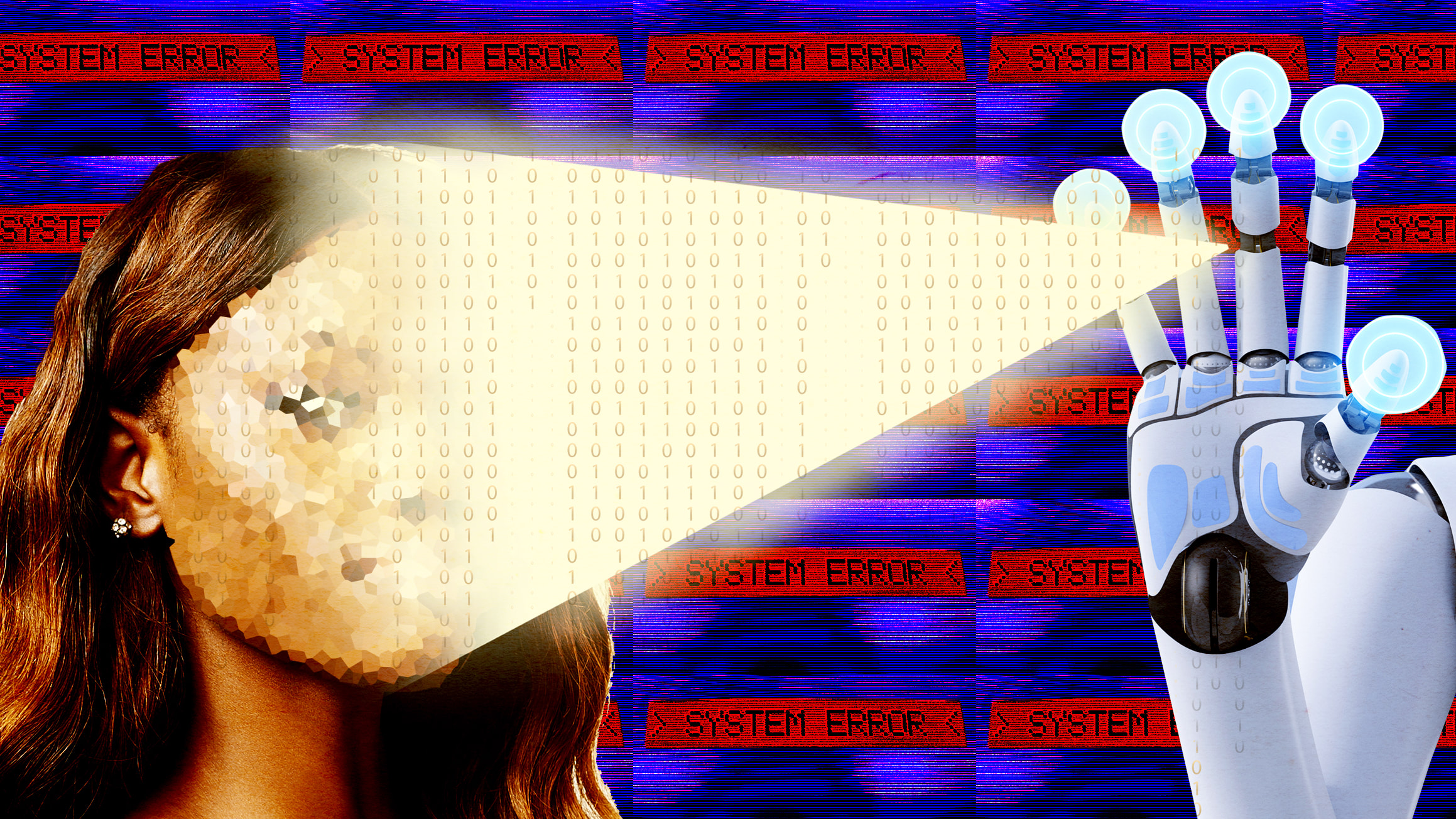

Photo Illustration by Lyne Lucien/The Daily Beast

Color photography was optimized for light skin. Facial recognition technologies are just carrying on color film’s historical non-registry of black people.

In a country where crime prevention already associates blackness with inherent criminality, why would we fight to make our faces more legible to a system designed to police us?

I haven’t watched too many dystopian science fiction movies, but I have seen more than one where the protagonists attempt to somehow obscure their identity from the police and their surveillance systems. Why? We cheer for the protagonists’ attempts to conceal their identities and evade, thwart, and undermine a system tracking, recording, and potentially penalizing their every move. We believe that people deserve some kind of right to privacy, and so we celebrate the heroic journey of the individual fighting to free herself and others.

In those filmscapes, it’s an advantage of sorts to have a face that’s illegible to the facial recognition apparatus. In reality, that’s not how it usually works. The failure of cameras (and even soap dispensers!) to properly identify or respond to black people is attributed to but not actually reflective of technological error or even algorithmic bias. Rather, it points to a deeply entrenched white supremacist worldview of who is classified as fully “human.”

The popular argument for helping improve facial recognition, and particularly its issues with black faces, is that it would mean fewer “wrongful arrests.” The London Metropolitan Police’s trials of automated facial recognition software, for example, yielded false-positive identifications a staggering 98.1 percent of the time. Amazon’s facial recognition tool, Rekognition, which American law enforcement agencies use today and which is particularly bad at positively identifying dark-skinned women, mistakenly connected 28 sitting members of Congress (including six members of the Congressional Black Caucus) to pictures from mugshots last year. So, the argument goes, we need systems that are able to see and distinguish us, and the positive modification of these systems is indicative of a step towards a more equitable world.

This is a well-meaning consideration, but one based on what may be the misguided hope of being able to reform from within a pipelined preschool-to-prison carceral system in which black people are regularly arrested and detained for “fitting the description.” Black people are functionally interchangeable and indistinguishable and not afforded the privilege of individuality for a reason. The purpose of the world’s largest prison system, that of the United States, is less about arresting the right people in service of protecting public safety than it is about the creation and maintenance of a giant labor pool for individuals and companies invested in the material structure of the system.

Automated facial recognition software perpetuates the racialized sight (or lack thereof) that plagued conventional cameras as colonialism, per Alexander Weheliye’s definition, helped divide and “discipline humanity into full humans, not-quite-humans, and nonhumans.” Light-skinned Europeans set the template for the “human,” in explicit opposition to the brown-skinned Africans demoted to not-quite-humans and nonhumans through missionary conversion attempts and the transatlantic slave trade.

In her book Dark Matters: On the Surveillance of Blackness, Simone Browne describes the racial origins and evolutions of Jeremy Bentham’s panopticon, an institution of social control and surveillance where individuals are watched without ever knowing whether they are being watched or where the watcher is located. Bentham envisioned it while traveling aboard a ship with “18 young Negresses” who’d been enslaved and held “under the hatches.” The contemporary surveillance structure cannot be delinked from the ensnaring and dehumanizing of black people.

Browne also refers to Didier Bigo’s recent coinage of the “banopticon,” a portmanteau of “ban” and “panopticon,” to describe technology-mediated systems of surveillance that assess individuals based on perceptions and designations of risk. Foundational is the idea of citizenship and assignments of “risks” to a nation-state (the “illegal immigrant”) or even a neighborhood (the “dangerous minority”). The euphemistic idea of “public safety” is always racialized, always defined by threats posed to a genteel white Christian “public.”

Camera technology preceded and differs from automated facial recognition technology and artificial intelligence, but we must first understand how color photography was optimized for light skin. Kodak had a near-monopoly on color film in the 1950s, and the lighting for shadows and skin tones was calibrated using a “Shirley card,” named for the white female employee who served as the original card model. (A similar template was used for lighting and coloring in filmmaking, where similarly porcelain-skinned women were called “China girls,” though they were, of course, not Chinese.) Steve McQueen’s 12 Years a Slave is a master class in the sophisticated lighting of dark skin, which often appeared distorted or featureless in early films. McQueen described seeing Sidney Poitier sweating alongside Rod Steiger in In the Heat of the Night: he’s sweating not only because it was hot in the places where they were filming, but because “he had tons of light thrown on him, because the film stock wasn’t sensitive enough for black skin.”

The use of harsh light was subsequently adopted by the apartheid government in South Africa. When it would photograph black natives for passbooks (passport-like documents the state used to track and control black movement), it used the Polaroid ID-2 camera systems that boosted the flash by 42 percent to match the additional light that darker black skin absorbs.

That camera may have also been an attempt by Polaroid to make inroads on Kodak’s largest clients in the confectionery and furniture industries, who complained that dark chocolate and dark furniture were being poorly photographed. But photographer Adam Broomberg believes Polaroid designed the ID-2 camera expressly for these surveilling purposes, as he explored in his 2013 co-curated exhibition “To Photograph the Details of a Dark Horse in Low Light.” For the show, Broomberg and his collaborator Oliver Chanarin documented Bwiti initiation rites in Gabon using ID-2 systems and leftover Kodak film stock that had expired in the 1970s. They sought to visualize this technologically prejudicial capturing of non-whiteness, raising the question of whether these racial prejudices might be inherent to the medium. In her series on unlearning photography, scholar Ariella Azoulay writes that we should “imagine that the origins of photography go back to 1492” so that the ability to “discover new worlds” through film should be coupled with other colonialist claims, as the camera was and continues to be an imperial weapon.

As a photographer, I see facial recognition as an extension of color film’s historical non-registry of black people. Facial legibility has significant implications for how black people are literally seen, understood, and engaged in the world. It is not simply an algorithmic error that Google’s image-labeling misidentified black people as gorillas (which the company corrected by simply blocking the algorithm from identifying gorillas, chimpanzees, and other primates at all and claiming to be “appalled and genuinely sorry” for the remaining kinks in its image-recognition abilities rather than openly engage with why the error had been made).

This is not simply a problem of “racist code” that can be fixed with diversity initiatives to add more black coders and software engineers to correct technical deficiencies within an unchanged technological structure. The problem is that black people are simply not human enough to be recognized by the racist technology created by racist people imbued with racist worldviews. We are, to these algorithms and racists alike, still literally monkeys.

The technocrats who say and even believe that artificial intelligence and automation will redeem us have already opened Pandora’s Box. Black people rushing to help those technocrats are assisting in refining the tools of the American surveillance state and helping them become more sophisticated. While there are certainly tremendous benefits to be had from automation (it can, for example, be useful for disabled and elderly people with limited mobility or memory issues that make household tasks difficult), it is not inherently good or even neutral; nor is it a part of some natural or inevitable technological progression within human existence.

Just as Barack Obama’s presidency taught us that blackness leading empire does not amount to social progress, it is not social progress to make black people equally visible to software that will inevitably be further weaponized against us. We are considered criminal and more surveillable by orders of magnitude; whatever claim to a right to privacy we may have is diminished by a state that believes that we must always be watched and seen.

The tech industry is enthusiastically supporting the state’s mandate to surveil. Any facilitation in improving its accuracy and efficiency in that support is, at best, a pyrrhic victory.