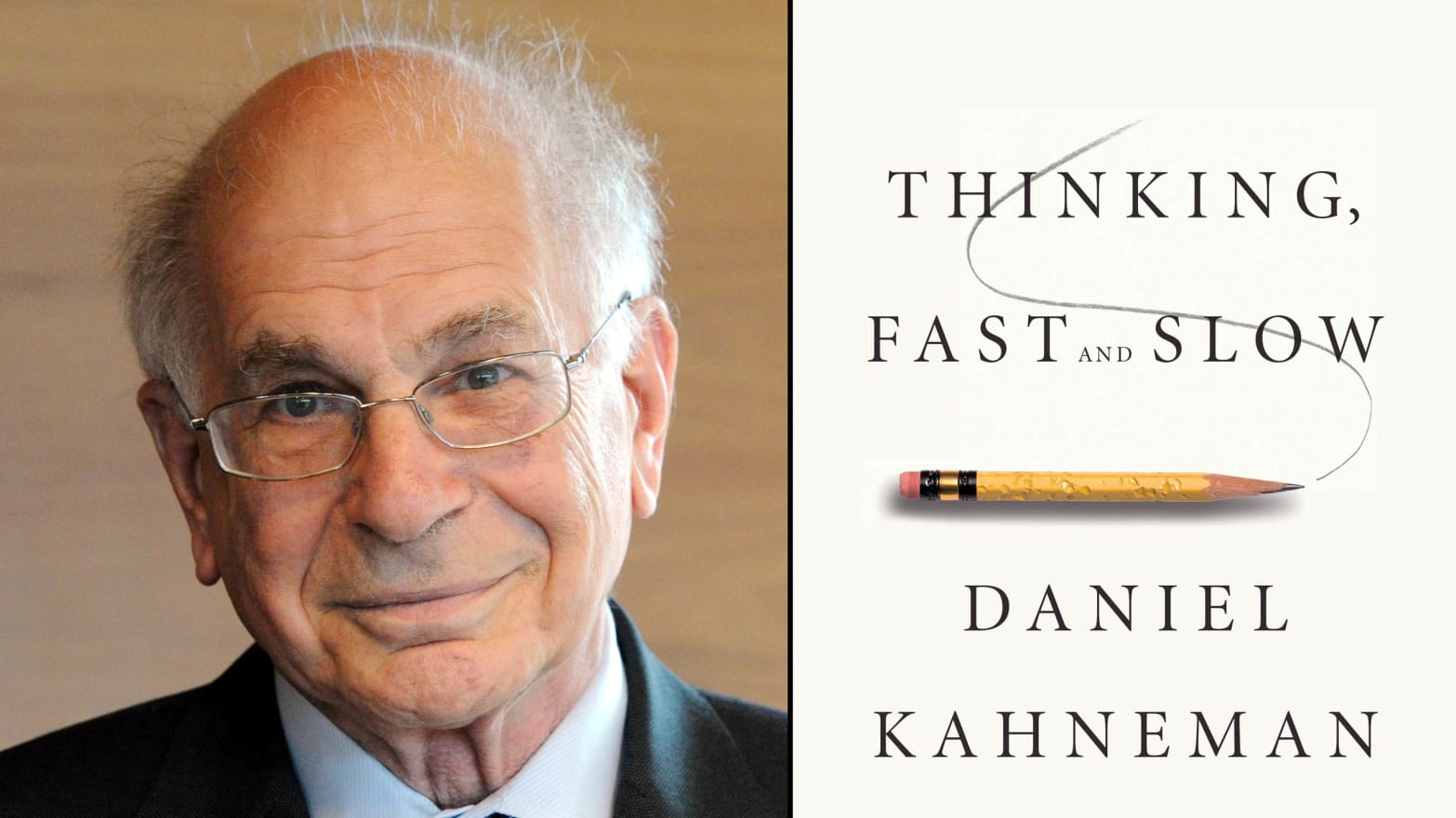

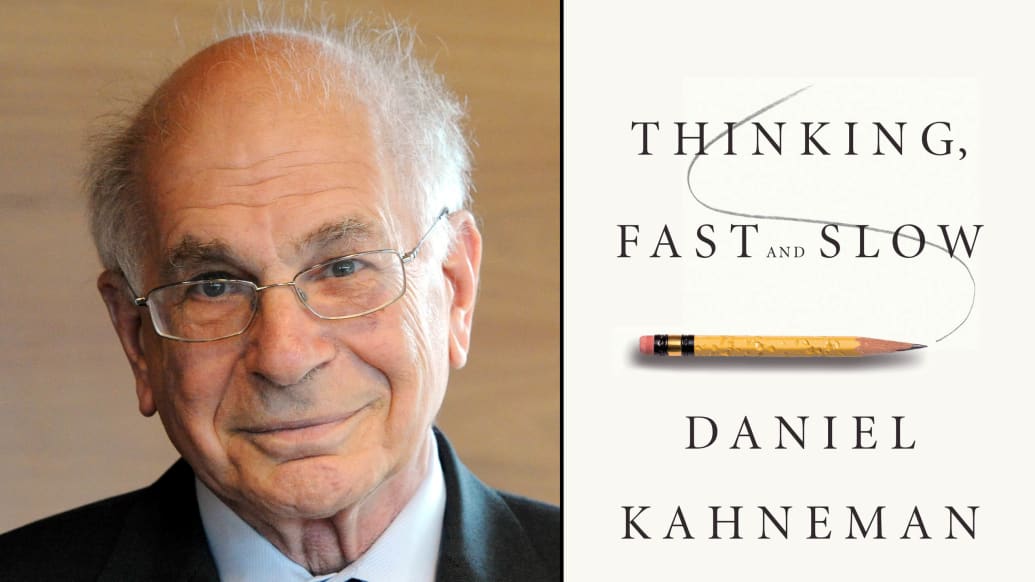

If you’re looking to better understand your own brain and only have time to read one book on the subject, it should be Daniel Kahneman’s Thinking, Fast and Slow, now out in paperback. Kahneman, a psychologist at Princeton University who won the 2002 Nobel Prize for economics for work that he and longtime collaborator Amos Tversky did on the psychology of decision making, gives a detailed, comprehensive account of how our cognitive processes are divided into fast (“System 1”) and slow (“System 2”) thinking. System 1 handles basic tasks and calculations like walking, breathing, and determining the value of 1 + 1, while System 2 takes on more complicated, abstract decision making and calculations like 435 x 23. Many of our mistakes, Kahneman writes, come when exhaustion or other factors cause System 1 to do jobs better suited for System 2.

In a recent email interview, Kahneman explains the dangers of overconfidence, why economists are still at the top of the policy-making pecking order, and his good-natured gripe with the term “behavioral economics.”

It seems like overconfidence is one of the big targets of Thinking, Fast and Slow. Unfortunately, there’s some evidence that people are more drawn to those who exhibit this tendency, even when it isn’t warranted (such as political prognosticators). How do we get around our ingrained tendencies to be attracted to those who loudly proclaim easy answers?

This is most difficult where it matters the most, in running a democracy. People like leaders who look like they are dominant, optimistic, friendly to their friends, and quick on the trigger when it comes to enemies. They like boldness and despise the appearance of timidity and protracted doubt. Here, the hope for the selection of qualified leaders is in serious and critical media, but the incentives of popular media favor mirroring the preferences of the public, however misguided.

Prospects are quite a bit better for the selection of good leaders in organizations. In business enterprises as well as in politics, the more assertive and confident individuals have a big advantage, especially if they are also lucky and achieve a few early successes. But organizations are better placed to evaluate people by substantive achievements and by their contributions to the conversation. They can apply slow thinking to the selection of leaders, and they should.

Do you see any resistance to the ideas in Thinking, Fast and Slow from people who don’t want to acknowledge how error-prone the human brain can be under certain circumstances?

Amos Tversky and I encountered this kind of resistance to our early work, which was focused mostly on errors of judgment, rather than on intelligent performance. Some people chose to infer that we believed humans to be feeble-minded, which we never did. Thinking, Fast and Slow is explicit in offering a view of the mind that deals with marvels as well as flaws, and it has largely escaped the criticism that it is biased against human nature. However, I have encountered some people, especially in the field of finance, who can easily think of individuals (often themselves, sometimes Warren Buffett) who understand the world with far more precision than my book suggests anyone can.

What has it been like winning the Nobel Prize and seeing your work explode in popularity without Amos Tversky around to share these experiences with you?

The work was already quite well known before Amos’s death, and he knew before he died that we had been nominated for the Nobel and were quite likely to get it eventually. For him, the Nobel was one of many things that he was going to miss by dying at 59, and certainly not the most important. As for me, I never forget that the recognition I get is for work that was done by a successful team of which I was lucky to be a member.

It’s a complicated question, but what is the simplest, most straightforward advice you’d give to someone who wants to make sure their System 2 isn’t ceding certain important decisions and calculations to System 1?

Not really a complicated question because the answers are not surprising. Slow down, sleep on it, and ask your most brutal and least empathetic close friends for their advice. Friends are sometimes a big help when they share your feelings. In the context of decisions, the friends who will serve you best are those who understand your feelings but are not overly impressed by them. For example, one important source of bad decisions is loss aversion, by which we put far more weight on what we may lose than on what we may gain. Advisors are likely to give us advice in which gains and losses are treated more neutrally—they are more likely to adopt a broad and long-term view of our problem, less likely than the affected individual to be swayed by the fears and hopes of the moment.

You note in the foreword to the recently released The Behavioral Foundations of Public Policy that economists have a “monopoly” on policy making, that “Like it or not, it is a fact of life that economics is the only social science that is generally recognized as relevant and useful by policy makers.” Why is that?

Policy makers, like most people, normally feel that they already know all the psychology and all the sociology they are likely to need for their decisions. I don’t think they are right, but that’s the way it is. On the other hand, people who have not studied economics are fully aware of their ignorance. The use of mathematics adds a touch of magic to economics. Indeed, it makes perfect sense for economists to be the interpreters of policy-relevant research, because they understand and are trained to use big data. This, and the fact that policies always involve tradeoffs and almost always involve money, explains the dominant role of economics in policy.

Something else has happened in recent years that is amusing, but also frustrating for psychologists. When it comes to policy making, applications of social or cognitive psychology are now routinely labeled behavioral economics. The “culprits” in the appropriation of my discipline are two of my best friends, Richard Thaler and Cass Sunstein. Their joint masterpiece Nudge is rich in policy recommendations that apply psychology to problems—sometimes common-sense psychology, sometimes the scientific kind. Indeed, there is far more psychology than economics in Nudge. But because one of the authors of Nudge is the guru of behavioral economics, the book immediately became the public definition of behavioral economics. The consequence is that psychologists applying their field to policy issues are now seen as doing behavioral economics. As a result, they are almost forced to accept the label of behavioral economists, even if they are as innocent of economic knowledge as I am. Furthermore, these psychologists are rewarded by greater attention to their ideas, because they benefit from the higher credibility that comes to credentialed economists.

Obviously you (and Eldar Shafir, and other researchers) are hoping to change this, to have psychology better represented at the policy-making table. Where do you think Thinking, Fast and Slow has fit into this effort, and what’s next in the ongoing attempt to weave psychological findings more tightly into public policy?

The intrepid readers who get close to the end of my long tome will find an enthusiastic endorsement of the policy applications that have come under the label of behavioral economics. I am very optimistic about the future of that work, which is characterized by achieving medium-sized gains by nano-sized investments. But I hope that the work will eventually be recognized for what it is and relabeled. In the U.K., for example, there is a unit doing that work at 10 Downing Street. Its informal name is “the Nudge Unit” and its formal name is the “Behavioural Insights Team.” It is headed by a psychologist. The value of proper labeling is that good psychologists are more likely to be drawn to participate in efforts that explicitly recognize their discipline.

What’s so powerful about the rational actor model? Do you think economists will ever willingly give up those parts of it which should be discarded?

Think of the kind of market that Adam Smith described. You can get a lot of insight into how just the right amount of bread gets to London in the morning by assuming that the baker and the other participants in the market pursue their own interests in a sensible manner. The rational-agent model takes this idea to its logical extreme. If you want to predict the behavior of a market, you are best off assuming individual agents who act in a way that is predictable and fairly simple—for example by assuming that the participants are similarly motivated and exploit all their opportunities. I am not an economist, but I find it hard to imagine that they will ever give up the use of schematic individual agents, even if they endow these agents with a little more realistic psychology. And I see no reason why they should.

The rational-agent model has more questionable consequences in the domain of policy because the assumption that individuals are rational in the pursuit of their interests has an ideological coloring and policy implications that many would view as unfortunate. If individuals are rational, there is no need to protect them against their own choices. At the extreme, there is no need for Social Security or for laws that compel motorcycle riders to wear helmets. It is not an accident that the department of economics at the University of Chicago, one of the most illustrious in the world, is known both for its adherence to a strict version of the rational-actor model and for very conservative politics.

It seems as though there are many areas in which economists and psychologists are vying for the same public-policy space. How do you get around the fact that economists can produce elegant models, nifty graphs, and the like (even when the underlying ideas aren’t sturdy), but psychology research isn’t always quite so easy to present to policy makers in a shiny, impressive-looking way?

You should not play down psychologists’ ability to turn out nifty PowerPoint slides. More seriously, I see much more collaboration than competition between psychologists and economists in the domain of policy, and my only quibble is with the label. I would like them all, when they collaborate, to call themselves behavioral scientists. The synergy is evident in policy books such as Cass Sunstein’s Simpler and the forthcoming Scarcity: Why Having Too Little Means So Much, by Sendhil Mullainathan and Eldar Shafir, which deals with both the economics and the psychology of poverty.

Editor's note: A previous version of this article incorrectly identified the University of Chicago as Chicago University. The post has since been updated.