Do you really know if that pro-Trump meme or far-right tweet is from a real person, or just an unmanned string of code that was purchased for pennies?

Although bots—automated accounts on social media—are certainly not a new phenomenon, they have a renewed political significance. Part of the Russian government’s well-documented interference in the 2016 U.S. election included pushing deliberately divisive messages through social platforms.

Investigators found that hundreds or thousands of dodgy Twitter accounts with Russian digital fingerprints posted anti-Clinton tweets that frequently contained false information.

A Daily Beast investigation reveals manipulating Twitter is cheap. Really cheap. A review of dodgy marketing companies, botnet owners, and underground forums shows plenty of people are willing to sell the various components needed to run your own political Twitter army for just a few hundred dollars, or sometimes less.

And once your botnet is caught, it takes only a few minutes to do it all over again, thanks to holes in Twitter’s security and sign-up features.

“It requires very little computing power to fire this stuff out,” Maxim Goncharov, a senior threat researcher at cybersecurity firm Trend Micro who has studied the trade of social media bots, told The Daily Beast.

The crossover between companies offering services to promote brands and those selling Twitter accounts to criminals highlights a constant struggle throughout the Twitter bot industry: everything is murky.

“There’s a very large scale of grey here,” Goncharov said. With the bot economy, it’s “really hard to put your finger on it and say, this is where we’ve crossed the line from legitimate to shady,” he added.

***

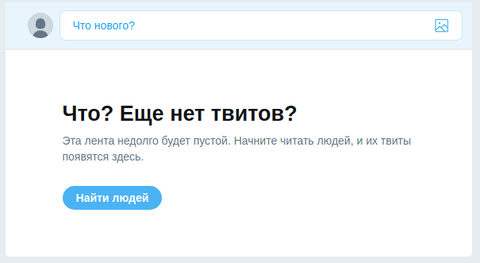

One Russian and English language site called Buy Accs offers 1,000 Twitter accounts for $45. To test the service, The Daily Beast bought 1,000 accounts with the pseudo-anonymous digital currency bitcoin.

The Russian accounts are as plain as can be: they have no avatars, no tweets, and no followers, and they date from July and August of this year. But they work, come with functioning email accounts, and can be used to tweet without immediately raising any alarm bells at Twitter. Delivery of the accounts is near instant, and is done largely automatically, with no direct human interaction required.

Account vendors will, though, face obstacles as they build up their own bank of users to sell. Making accounts in some sort of automated fashion, by using a script to quickly churn through Twitter’s sign-up process, may trigger the social network’s fraud alarms and get the accounts blocked, meaning at least some of the process is likely to be manual.

“All accounts we have are created with bots or manually. We never sold compromised, stolen or other illegally obtained accounts,” a customer service representative from Buy Accs told The Daily Beast. “Regular accounts are created with CAPTCHA recogniztion (sic), manual or automatic.”

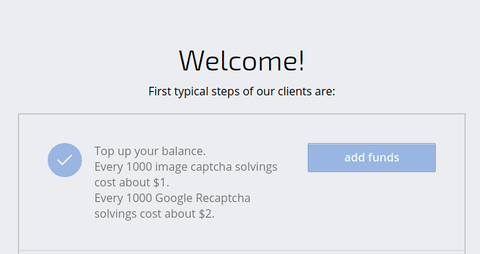

Fortunately, paying someone to fill in pesky CAPTCHAs—those prompts that ask you to type in a series of characters, or click on all of the vehicles in an image—is exceptionally cheap.

“One hundred percent of captchas are solved by human workers from around the world. This is why by using our service you help thousands of people to feed themselves and their families,” the website for one such service, called anti-captcha.com, reads, although perhaps the benevolent outlook is a bit disingenuous.

To solve Google’s more advanced CAPTCHAs, the site charges around $2 per 1,000 solutions, payable by everything from credit cards and PayPal to bitcoin.

And the service works for creating Twitter accounts. Security researcher Fionnbharr Davies told The Daily Beast he spent $10 at anti-captcha.com when constructing his own Twitter botnet.

Other Twitter account sellers focus on years-old “aged” accounts. Typically, accounts with longer lifespans might be more valuable: if an account is older, and did not just spring to existence yesterday, Twitter may be less likely to flag the user as fraudulent, and it could be harder to spot that the account is in fact part of a bot army. That can be useful when trying to pass off your politics-spewing bots as real people.

“These accounts are highly sustainable,” a post on Black Hat World, a forum whose users are focused on a mix of techniques and products for online marketers, reads.

For $150, budding bot-herders can buy 1,000 “vintage” accounts, dating from between 2008 and 2013. Another post advertises 500 accounts for $100, and a third post offers so-called PVAs (phone verified accounts), whose users have a better chance of avoiding spam filters. The customer support rep from Buy Accs said the company’s PVAs are created manually, with either virtual phone numbers or physical SIM cards.
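To put those listings side by side, the arithmetic can be sketched in a few lines of Python. The prices are the ones quoted above; the labels and the helper names (`price_per_account`, `summarize`) are illustrative assumptions, not anything the vendors publish.

```python
# Listing prices quoted in the article: (label, number of accounts, price in USD).
# The labels are shorthand chosen for this sketch.
LISTINGS = [
    ("Buy Accs, new accounts", 1000, 45.0),
    ("Forum post, 'vintage' 2008-2013 accounts", 1000, 150.0),
    ("Forum post, 500-account bundle", 500, 100.0),
]


def price_per_account(count: int, total_usd: float) -> float:
    """Return the cost of a single account in US dollars."""
    if count <= 0:
        raise ValueError("count must be positive")
    return total_usd / count


def summarize(listings):
    """Map each listing label to its per-account price in cents, rounded to 0.1."""
    return {
        label: round(price_per_account(count, usd) * 100, 1)
        for label, count, usd in listings
    }


if __name__ == "__main__":
    for label, cents in summarize(LISTINGS).items():
        print(f"{label}: {cents} cents per account")
```

On these figures, a blank new account costs a few cents, and an aged one costs roughly three to four times as much.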

Years-old accounts appeared in Twitter bot attacks recently. In August, thousands of automated accounts turned on bot researchers from The Atlantic Council’s Digital Forensic Research Lab (DFR Lab). At least some of those bots dated back to 2013, and seemingly tried to harass targets by retweeting certain posts en masse, clogging up their victims’ notification feeds.

In one case, it appeared the wave of activity caused Twitter to temporarily lock this reporter’s own account. For those sorts of attacks, The Daily Beast found one Twitter botnet owner on a Russian crime forum selling 100 retweets for 14 rubles, or around 24 cents.

“Everything will be fine,” one customer support representative for the botnet owner told The Daily Beast, when asked if Twitter would ban a user for buying retweets. The service also separately advertises on a more legitimate-looking marketing site.

But for bots focused on political manipulation, the overwhelming number of accounts might be more of a priority than their quality.

“The history is less significant; what they’re looking for is sheer volume to get something up in trending, to trigger Twitter’s natural algorithms, that will then promote content,” Goncharov, the threat researcher, said. Even if Twitter bans offending accounts fairly quickly, it might not matter to the bot-herder, as long as their message gets out.

“The typical pattern of traffic is a very rapid acceleration (from a few tweets a minute to hundreds of tweets a minute), a short-term spike, and then an equally rapid collapse in traffic as the bots turn to other subjects,” Ben Nimmo, a senior researcher at DFR Lab who has followed political bot campaigns, told The Daily Beast.
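The pattern Nimmo describes can be expressed as a rough check on per-minute tweet counts for a hashtag. What follows is a minimal sketch, not a method used by DFR Lab or Twitter: the function name and the thresholds (`low`, `high`, `window`) are hypothetical values picked to match "a few" and "hundreds" of tweets a minute.

```python
from typing import Sequence


def looks_like_bot_burst(
    per_minute: Sequence[int],
    low: int = 10,     # assumed ceiling for "a few tweets a minute"
    high: int = 100,   # assumed floor for "hundreds of tweets a minute"
    window: int = 5,   # assumed number of minutes allowed for the rise and the fall
) -> bool:
    """Return True if traffic jumps from <= low to a peak >= high and drops
    back to <= low, each within `window` minutes of the peak."""
    if not per_minute:
        return False
    # Index of the busiest minute (the first one, if there are ties).
    peak = max(range(len(per_minute)), key=lambda i: per_minute[i])
    if per_minute[peak] < high:
        return False
    before = per_minute[max(0, peak - window):peak]
    after = per_minute[peak + 1:peak + 1 + window]
    rose_fast = any(count <= low for count in before)
    fell_fast = any(count <= low for count in after)
    return rose_fast and fell_fast


if __name__ == "__main__":
    burst = [3, 4, 2, 150, 300, 280, 5, 3]
    gradual = [3, 5, 20, 40, 80, 120, 110, 90, 70, 50]
    print(looks_like_bot_burst(burst), looks_like_bot_burst(gradual))
```

A heuristic this crude would misfire on genuine breaking news, which can also spike quickly, so it illustrates the shape of the traffic rather than a working detector.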

In order to turn the accounts into a botnet, though, its creator needs a way to command the users simultaneously. Again, plenty of options exist, many designed for grey online marketers. For just over $250, Tweet Attacks Pro lets users command an “unlimited” number of accounts, according to the product’s website.

Other pieces of software such as Twidium offer many of the same capabilities, and allow a botnet owner to post tweets, follow other accounts, or do pretty much anything else with a collection of accounts all at once. (In The Daily Beast’s tests, Twidium relied on Twitter’s API, which in turn needs accounts to be linked to a phone number.)

Botnet owners likely need to use proxies too (extra servers to route their traffic through) so that Twitter doesn’t simply block a swarm of connections all suspiciously coming from the same place. Plenty of the same marketing or criminal forums offering Twitter accounts naturally also advertise proxies.

On the Russian underground site offering retweets for cheap, one vendor sells 25,000 to 45,000 proxies spread around the world for $100 a week. It costs $800 a week for ‘exclusive’ servers, meaning you are the only customer using them.

“You need to login accounts from [a] private IP for long lasting,” another Twitter account seller on Black Hat World, who went by the name Glenn Maxwell, told The Daily Beast. The software that controls the accounts also typically provides options for quickly configuring different bots to use proxies.

Although it’s tricky to know exactly how specific Twitter botnets operate, data breaches show a bit more of what happens behind the scenes. A security researcher known as Zodiac provided The Daily Beast with a copy of an apparent bot database they found on an exposed server. The data contains usernames, passwords, and email addresses, mostly from the free mail.ru service, for just over 100 bots, which software can then use to log into Twitter. The database also includes logs of the bots’ activity.

“Your tweet to [user] has been sent!” one of the hundreds of log messages reads.

Another exposed database, this time for a Twitter bot spreading links to dodgy dating sites, acted as a store of images, chat-up lines, and profile bios. Whether a bot needed a persona to assume, or a woman to impersonate, it seemingly connected to this server and pulled what images it needed.

“They will have a pool of images that they’ve harvested from either stock sites, from other user profiles, or from other social networks,” Goncharov said.

Twitter declined to speak on the record about its anti-bot measures, but a spokesperson pointed to a previously published blog post from the company.

“It’s worth noting that in order to respond to this challenge efficiently and to ensure people cannot circumvent these safeguards, we’re unable to share the details of these internal signals in our public API,” Colin Crowell, Twitter’s VP of public policy, government and philanthropy, wrote in June.

Of course, having a network of Twitter accounts is one thing, but being able to use them effectively to push a certain political message is another issue entirely—whether that’s pushing Trump merchandise, spreading a leak of French government emails, or targeting researchers and journalists.

“Bot herders normally do this by trying to push their message (often a hashtag) into the ‘Trending’ lists. Some groups do this very effectively, preparing stocks of memes in advance, coordinating their posts and using bots to generate thousands of tweets an hour,” Nimmo from DFR Lab said. “We’ve seen many cases of this succeeding in France, Germany and the US, for example.”

“Ironically, botnets have the most effect in spreading their messages when real people or media quote them,” he added.

Getting hold of everything you need to run a political botnet, though, is easy. You don’t need to be a hacker to figure it out.

“It is very simple,” Goncharov said.