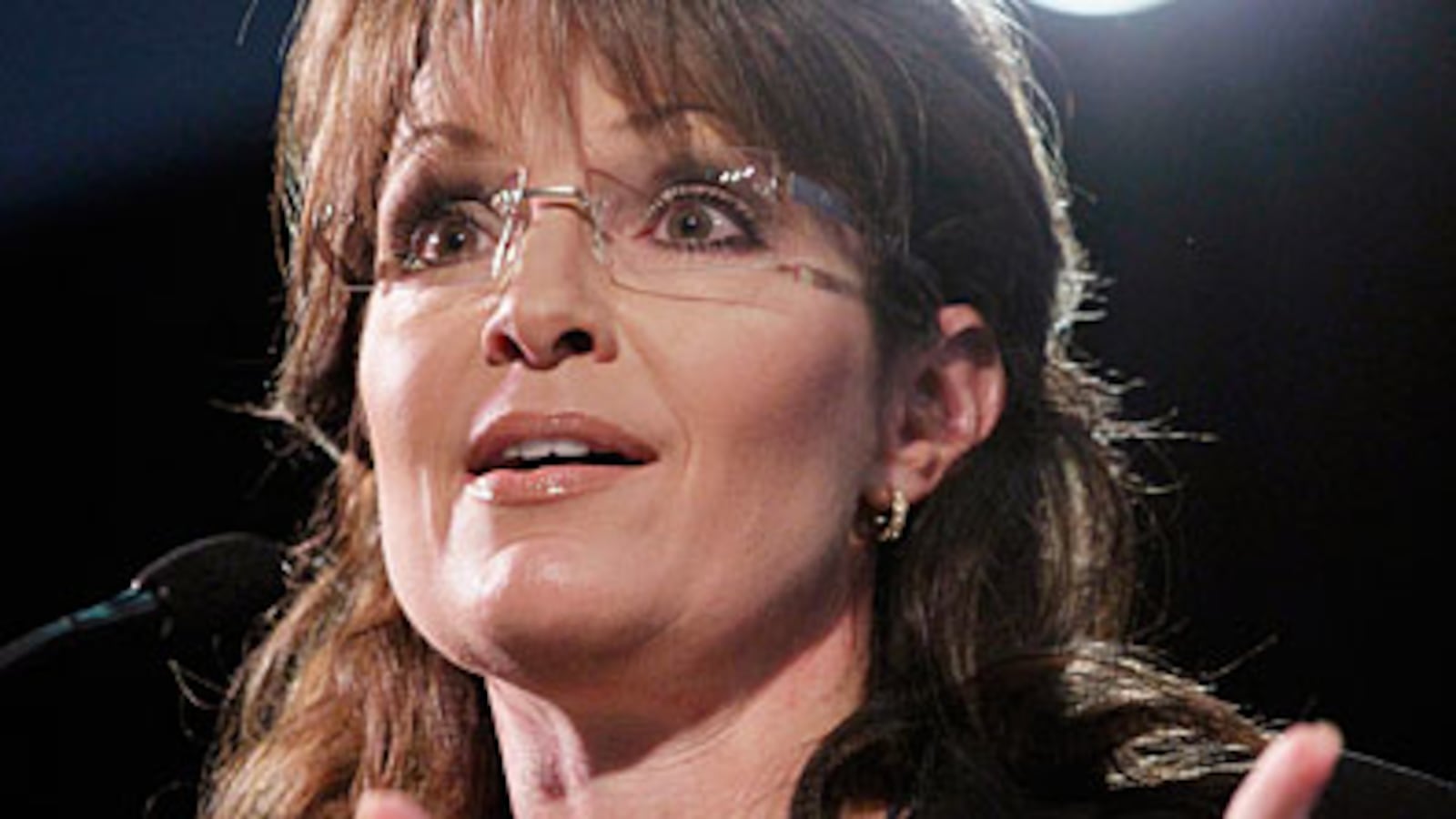

Sometime Thursday morning I realized Sarah Palin's controversial Facebook note about the "Ground Zero Mosque" had vanished. In its place was an error message explaining that the network "could not find the note you requested." It had "either been deleted" or had never existed in the first place.

In fact, according to a spokesman, the post had been removed by Facebook's automated systems. This was the result of a grassroots campaign by Tumblr users to report the former governor's post as "hate speech," an idea introduced by a Tumblr blogger the media has identified as "moneyries." Hello everyone, that's me. And soon I was inundated with messages from her fervent fans.

Anyone following Palin's comments in the media over the past week or so knows that the former governor of Alaska issued a screed on Facebook, her preferred form of communication these days, outlining her reasons for opposing the construction of an Islamic community center just a few blocks from Ground Zero.

The original note, titled "An Intolerable Mistake on Hallowed Ground," was a response to comments made by New York City Mayor Michael Bloomberg, in which he argued it's un-American to "keep people from building a building," especially in a city that stands for "tolerance and openness." It made me wonder where Facebook, a government unto itself with a user base larger than the U.S. population, would draw the line on offensive material under its Terms of Service when someone is simply preaching "common moral sense," as Palin would call it. Or whether its own rules on censorship and free speech would let users themselves decide what counts as intolerant content, or in this case, "hate speech."

The truth is, many Internet communities lack any real transparency when it comes to governing the words of their users. Facebook's free speech policies are largely undefined, and it took the coordinated actions of a community a fraction of its size to prove it.

That all began when I decided to conduct a little experiment.

On Facebook, beneath the original post, right next to the time stamp, sits a hyperlink that reads "Report Note." On Wednesday morning, I clicked it. A pop-up box warned me, "You are about to report a violation of the Statement of Rights and Responsibilities. All reports are strictly confidential." (Ha! Not if I blog about it, Zuckerberg!) For the required reason, I selected the content in the "note text." For the report type, also required, I chose "Racist/Hate Speech," since this note, more than any other, seemed specifically aimed at restricting the religious activities of a specific group of people. The robots at Facebook would probably agree. The other report type options (Advertisement/Spam, Threatens Me or Others, Targets Me or a Friend, and Sexually Explicit Language) didn't apply anyway.

I snapped a screenshot and posted it to Tumblr with a call to arms: "Tumblr: Help Report Sarah Palin’s Ground Zero mosque note to Facebook for being 'Racist/Hate Speech.' Click-through to do it." I didn't expect much—maybe a couple dozen notes and a message from a Facebook employee following up on the complaint. "Hello," he might have said. "I saw you reported a post by our popular human writer Sarah Palin. Did you have any specific complaints about the content? Otherwise, move along, nothing to see here, thanks for calling."

That Facebook communication never came, but in the meantime the click-throughs on Tumblr did. Less than 24 hours after I published the first post, it had pretty much "popped." In a grassroots campaign that was part-4chanian, part-Orwellian, hundreds of users of the community blogging platform Tumblr shared my post, offered their own commentary, and logged onto Facebook on Wednesday to report Palin's note as hate speech. After enough user reports, it appeared that Facebook's robots had automatically pulled the plug on the post without consulting any humans within the company's ranks.

Before long, Ben Smith of Politico was reporting, "Neither Facebook nor Palin's camp was immediately sure what happened," and asking whether I was "gaming Facebook to suppress free speech," or whether it's "really hate speech that shouldn't be allowed on there." I told him that "refudiating" Palin wasn't my intent; rather, it was "a social experiment to explore the boundaries of Facebook's government-like Terms and Conditions and the power of the Tumblr community."

From there, the story spread like wildfire. CNET's The Social wrote, "A controversial, religiously charged post on conservative political figurehead Sarah Palin's official Facebook page has disappeared—and a grassroots campaign to have it pulled from the social network may be responsible." Allahpundit at the conservative power-blog Hot Air wrote that he was "surprised because the people responsible for this can't seriously have believed that Facebook would pull the plug on a post written by the one person who, more than any other, has made FB politically relevant." In apologizing to Palin, Facebook spokesman Andrew Noyes told CNN, "The note in question did not violate our content standards but was removed by an automated system," adding that the company is "always working to improve our processes and we apologize for any inconvenience this caused."

Since the network pulled the post, the former governor has been fairly silent. The only indications that her ship had been rocked were a tweet ("Re-posted: An Intolerable Mistake on Hallowed Ground") and an addendum to the original post that read, "The original post of this statement (on July 20, 2010) was somehow unintentionally deleted by mistake or technical glitch."

But in her silence, her supporters awoke. I began getting inexplicable Facebook messages from individuals clearly outside my "social web," tweets from users with unidentifiable avatars (a trend in itself, for sure), and blog comments calling me a "liberal scumbag censor," "the typical Islamist," a "Liberal Goosestepping Fascist," and a "Dishonest Fraud."

Most of the complaints from the conservative side of the blogosphere described my actions, representing "The Left," of course, as "straight from the liberals' playbook." I had probably gleaned this idea from Journolist! I had done the predictable, and with a blogger's whistle had summoned the armies of espresso-wielding drones to destroy the First Amendment. But I think they're missing the point. Sure, people who disagree with Sarah Palin's entrance into New York City politics may want to see her diatribes simply go away. Others may want their Facebook newsfeeds free of breastfeeding women, those "I Hate Muslims" Facebook groups, or people who identify as Holocaust deniers; the latter two have been sticky issues for Facebook before.

But whether Facebook should publish such content needs to be a decision for Facebook's administrative policy hacks, not us, since we users live under Zuckerberg's non-democratically developed Terms and Conditions. The Internet is still largely defining just what "free speech" online entails, and at this point, while it's our party, the parents are still upstairs.

Moving forward, as Facebook modifies its "processes" and finds a way to help users identify genuine violations of its terms of service, it will have to combine its automated systems with the oversight that only a human can provide.

The Machines, we've learned here, are plainly incapable of identifying the line between political opinion and what some may view as hate speech, especially when their robotic egos are egged on by a tightly connected Internet community bent on keeping Palin out of New York, and perhaps even offline.

Brian Ries is a Philly-born senior editor at FREEwilliamsburg.com and social media strategist at Attention. He lives in Brooklyn.