The warning sounded dire: Sex traffickers were placing zip ties on cars of people they hoped to kidnap. Within days of the claim appearing in a TikTok video, the post had racked up more than 700,000 likes, many of them from scared teens. Every one of them was playing into a hoax: The zip-tie scare was a previously debunked rumor that found new life on the video-sharing app.

In 2016, hoaxes like the Pizzagate conspiracy theory took over Facebook and Twitter. Since then, a new social media powerhouse has emerged: TikTok, a video app with 1.5 billion global users. With the 2020 election looming, TikTok is about to face its own disinformation reckoning.

While aspects of the platform’s design make it hard for some hoaxes to spread, researchers say, the app’s structure and opaque moderation system can warp users’ perception of the news, spreading half-truths or keeping some topics out of the public eye altogether.

Even if the worst happens, it won’t look quite like 2016, when the Pizzagate conspiracy theory racked up countless shares across mainstream social media. Followers of the right-wing hoax falsely claimed that Hillary Clinton was involved in a child sex-trafficking ring based out of a D.C. pizzeria. And platforms like Facebook and Twitter made it easy for users to repost each other’s bizarre claims.

That exact scenario couldn’t happen on TikTok.

Unlike Facebook and Twitter, which allow users to share others’ posts, TikTok has no equivalent of a “retweet” button. Instead, it requires users to make their own original posts. That means every user’s profile is a collection of their own work, and it creates a natural barrier to some kinds of disinformation, said Cristina López G., a senior research analyst at the nonprofit Data & Society.

“Within the platform, that reduces reach,” López said. TikTok allows users to riff on each other’s videos by borrowing their audio or making “duets” (split-screen videos that show the original video alongside the new clip). López described the format as a “yes, and…” structure. If users want to amplify a message, they need to add their own voice, making it harder for anonymous trolls or bot armies to spread disinformation as quickly and efficiently as they can on Twitter.

So far, the audio-focused sharing has been harder to weaponize than text-based posts.

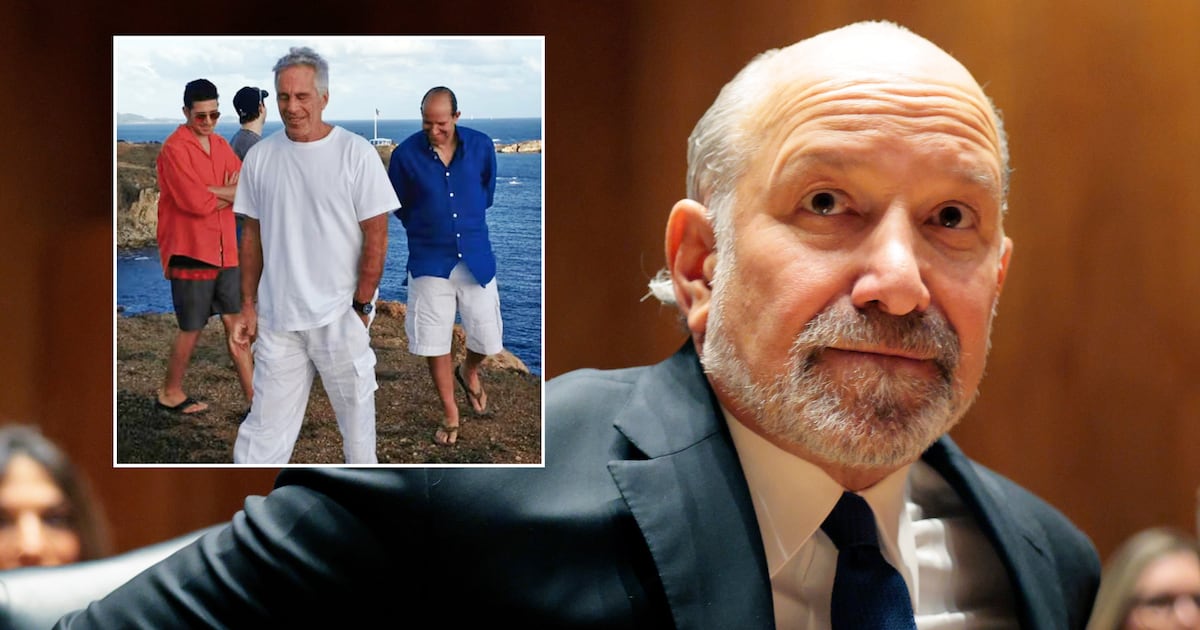

“The main building block for content on TikTok is sound. That poses some challenges for disinformation, making it less compelling,” López said. “I’m not trying to claim there isn’t any disinfo on TikTok or that it will remain impervious to it: if you type ‘epstein’ or ‘qanon’ on the search bar you’ll see that there is... some. But you’ll see that the sound meme is a challenge for producers of disinfo who are trying to create content in the same way they do elsewhere—their content doesn’t seem to go very far because of that.”

TikTok did not return a request for comment.

But TikTok’s closed nature could also make it more susceptible to hoaxes because content on the platform doesn’t link out to other sources, according to disinformation expert Cameron Hickey.

“Everything you’re experiencing, you experience inside the platform,” Hickey said. “In TikTok, in a sense, you’re locked inside.”

Other platforms have consciously tried to crack down on disinformation by limiting users’ ability to share posts. In response to killings in India fueled by social media, the Facebook-owned messaging service WhatsApp limited its forwarding feature earlier this year. Previously, users could forward a message 20 times. Now they’re capped at five, in a bid to fight “misinformation and rumors.”

TikTok also has its own content moderation system. And like those of Facebook and Twitter, that moderation system comes with its own thorny ethical questions.

Some extreme keywords appear to be banned in TikTok searches. A search for Atomwaffen, a murderous white supremacist group, yields no results, only a warning that “this phrase may be associated with hateful behavior.” It’s unclear when TikTok rolled out the policy. A 2018 Vice investigation found pro-Atomwaffen material on the app, and some hashtags associated with the group still yield search results. Similarly, “Hitler” surfaces no TikToks (and, weirdly, no content warning), but “Honkler,” a pointless-to-explain Nazi meme about clowns, still turns up videos.

In November, a whistleblower told the German publication Netzpolitik that TikTok limits the reach of some videos by secretly labeling them as “visible to self” (only the uploader can see the video), “not recommended,” and “not for feed.” The latter two labels make it harder to discover a video via search or in TikTok’s powerful recommendation feed.

A leaked TikTok moderation guide from November included some common clauses (such as removing videos deemed to promote terrorism) as well as other, more controversial policies. Like YouTube, which tried to remove some conspiracy theories from its recommendations this year, TikTok has a policy against “conspiracy theories that put the public safety at risk, or that egregiously lack a factual basis” in a way that “can introduce real world harm.” (It lists “anti-vaccine propaganda,” “Holocaust denial,” and “fake bomb scare pranks” as examples.) The videos are allowed but should be labeled “not for feed,” the guide said.

TikTok’s structure makes it less vulnerable to outright hoaxes than to misleading uses of actual news, according to Hickey, although a lack of data makes disinformation on TikTok harder to study. Hickey points to a TikTok video suggesting that Barack Obama’s purchase of a $14 million house on Martha’s Vineyard is proof that global warming isn’t real as exactly the kind of misleading video that can succeed on the platform.

“There’s nothing literally false in this, but there’s definitely a misleading statement,” Hickey said.

TikTok users can sometimes be pushed into producing more political content in their quests for a larger audience. Hickey said he’s witnessed users who rack up only middling numbers when they produce typical TikTok fare, like participating in viral dance crazes, only to see stratospheric engagement when they make a video playing on the “Jeffrey Epstein didn’t kill himself” meme.

Other TikTok policies pose further questions about the platform’s power over political speech. Videos “depicting controversial events” and “content that may lead to wider social division,” including footage of political protests, should be labeled “not for feed,” according to the leaked document. Those policies often line up squarely with the Chinese government’s interests. In September, amid suspicion that the China-based company was hiding footage of mass protests in Hong Kong, TikTok was also revealed to be censoring videos about the 1989 Tiananmen Square protests, the Tibetan independence movement, and religious groups banned in China.

And sometimes TikTok’s emphasis on music can be a boon to the far right. In India, Hindu nationalists have found a huge TikTok audience with songs encouraging the seizure of property in Kashmir and misogynistic treatment of Kashmiri women, Vice reported this summer.

The TikTok audience skews younger, meaning it could be a key place for people hoping to confuse or mislead young voters.

“This is a place where they’re likely to be forming their political beliefs,” Hickey said.

While far-right conspiracy theories do not appear as prominently on TikTok as on other platforms, the app has a thriving mainstream MAGA scene. For members of this community, TikTok’s insular nature can create a kind of conservative filter bubble, The New York Times noted in an article declaring “TrumpTok” a “safe space from safe spaces.”

Massive and growing, TikTok could be a mother lode for disinformation agents who want to influence the 2020 election. But any hoaxes they pull off will have to look different from the copy-paste conspiracy theories that swamped Facebook in 2016.

“It would be naive to say that bad actors won’t find vulnerabilities on TikTok to exploit,” López said, “but what seems to be happening for now is that disinfo in the shape and form we’ve seen it in other platforms simply flops on TikTok.”